I spent a big part of my teenage years playing a video game called Dota 2. Around the summer of 2019, an artificial intelligence research laboratory called OpenAI unveiled bots that it had trained for the game’s eSports scene. These bots went on to defeat the then world champions, and I watched that event with a lot of curiosity. At the time I was struggling to decide what I wanted to do with computer science and which field I wanted to specialize in. Eventually, I realized that the intersection of mathematics, optimization and computer science was what I found really interesting, and I set to work on it. I was lucky enough to get a summer research job at Colgate, where I was going to work on supervised machine learning problems. Since it was the summer right after the pandemic started, a lot of the summer courses that were traditionally offered in person had moved online. I thought I probably would not get another opportunity like this, so I enrolled in the Introduction to Artificial Intelligence with Python course at Harvard University. Since the course’s description was explicitly focused on AI and not data analysis, I thought it would help me round out my research’s focus and prepare me for some of my personal projects, which are more in line with the kind of work OpenAI did to train the Dota bots.

Overall, I was very satisfied with the first half of the course, which focused on a lot of “old-school” optimization problems, puzzles and games, and on algorithms to solve or model them. The topics we learnt in this part of the course included graph search algorithms, alpha-beta pruning, heuristic-based searches like A* search, propositional and first-order logic, knowledge representation, Markov chains, hill-climbing, simulated annealing and constraint satisfaction problems. I used these techniques and theories to solve puzzles of many kinds, from simple logic puzzles like Knights to creating crossword puzzles and building minesweeper and tic-tac-toe solvers. I found this part of the course very interesting: the projects were at just the right level of difficulty, and they gave me enough incentive to explore the topics in more depth on my own.

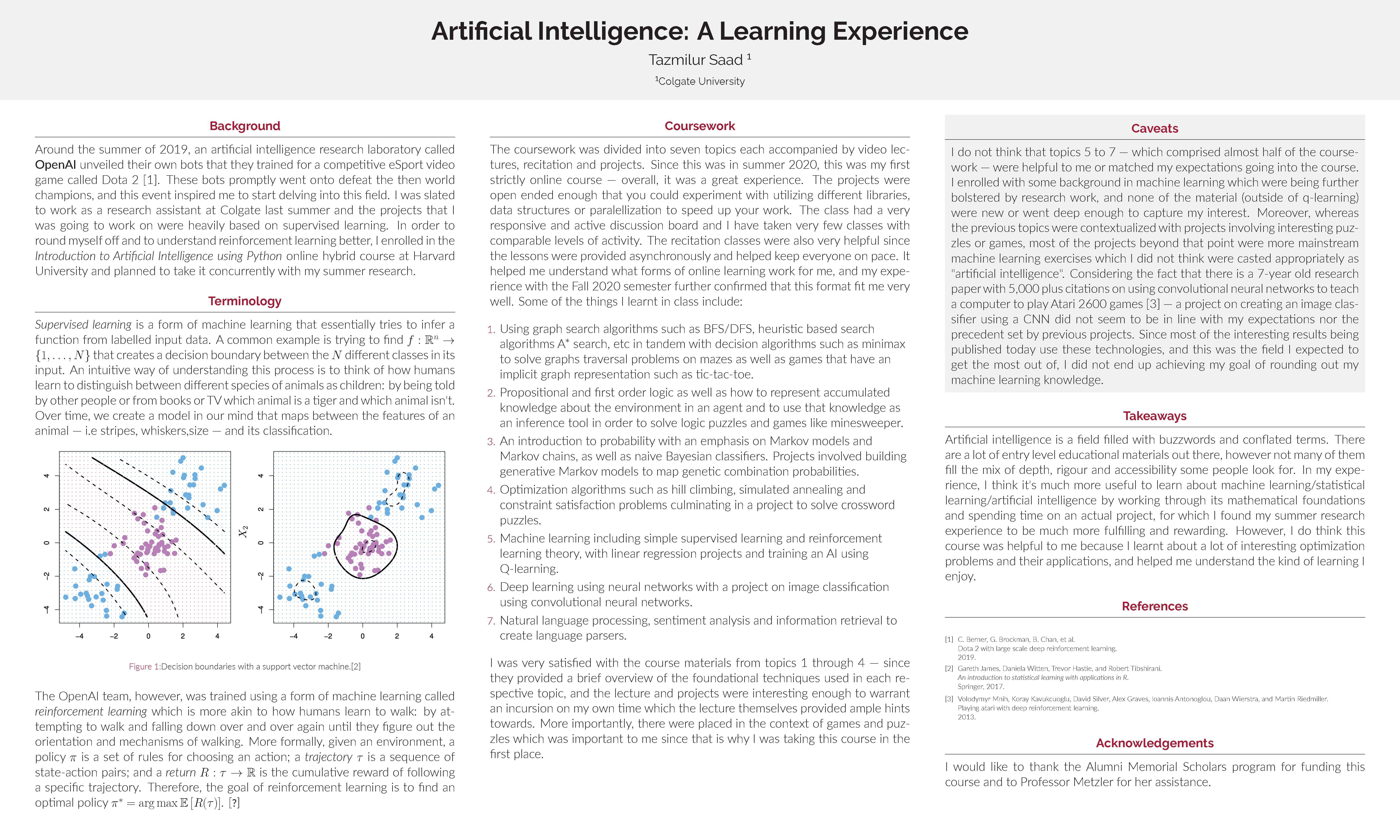

The second part of the course focused on more “modern” techniques that were essentially different forms of machine learning and their applications. We learnt about simple supervised learning concepts like (multilayer) perceptrons, SVMs and linear/logistic regression; reinforcement learning techniques like Q-learning; neural networks, their different forms (CNNs, RNNs) and their applications; and, briefly, NLP, sentiment analysis and information retrieval. However, I did not find much of this part very interesting. A lot of the things we worked with were black boxes, there simply wasn’t enough time to go deep into the mathematical basis of these topics, and I had already been exposed to them through prior experience and the research I was undertaking concurrently. More importantly, most of the projects were not framed around puzzles, games or anything of the sort; they were more akin to mainstream machine learning exercises. Although these are the technologies people employ today, and they were used heavily in OpenAI’s and other video game bots, which were my reason for joining this class in the first place, I did not find this part all that fulfilling or rewarding.

All in all, I found the course very interesting and rigorous, but the content of half of it was not what I expected, and I probably would not have signed up had I known that. However, I do consider it an important learning experience. It helped me understand what sort of online learning works for me, how to study on my own, and how important reading papers is in a field as new and fast-changing as reinforcement learning.